Published on 2019-12-30 | Updated on 2021-04-29 19:00:00 | words: 1032

If data is everywhere and everyone is both a consumer and a producer, everyone will be able to contribute to continuous improvement of services, products, and, yes, societies built around data.

Pending the development of an Artificial Intelligence smart enough to let you say (just to name one example): "Alexa, please let me know which data are stored by Amazon, and alert me if somebody outside those that I added to my 'guest data list' accesses it", and then combine that with, say, "Alexa, please check how I benchmark in my neighborhood in terms of trash recycling and water consumption"...

...we are stuck with computers, software, data scientists, and the like.

Anyway, for knowledge that has to be published online due to a law or regulation, or that is already published in other formats, there is no reason whatsoever that makes it compulsory to rely on "technical" people (business specialists included, not just computer professionals) to access the information.

Decades ago, I designed and applied on a test case with a partner (to design a service that then did not start: it was the mid-1990s, too early) a platform to enable subscription to knowledge.

In my activities, I often had to transfer knowledge and, except when we used Lotus Notes in the early 1990s, and multimedia and web pages in the late 1990s, most people actually asked only for printed documents, or their Acrobat version.

But there are better ways to make information accessible.

In August 2018, I shared with a few colleagues and friends, as a "beta test", a page and website located at https://robertolofaro.com/BFM2013tag (currently offline), using my own BusinessFitnessMagazine.com as a sample (specifically, a book that you can find at https://robertolofaro.com/BFM2013, a reprint of my 2003-2005 online quarterly magazine on cultural/organizational/technological change).

Sample of what? Of navigation through what an occasional user might be interested in. I read continuously, and save files, buy books, buy e-books, etc...

...but whenever I have to search for a reference or a quote, it is often a nightmare.

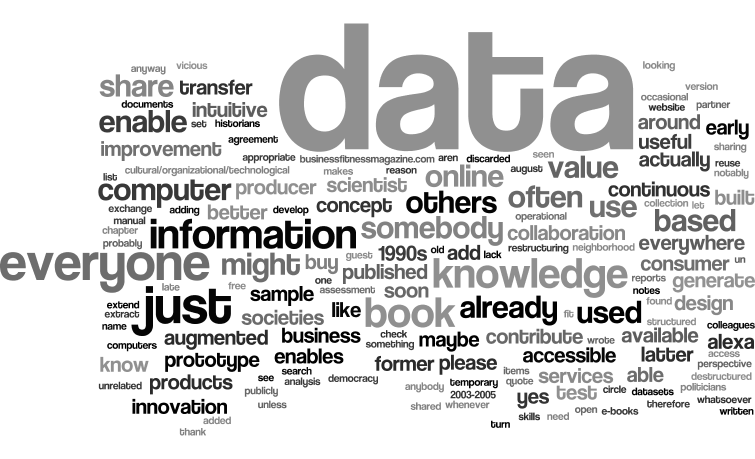

So, I expanded my old design for "knowledge transfer" by adding a "layer" to enable looking through structured information (a book, a manual, a collection of items) in an intuitive way, based upon how often a word was present in each chapter, section, paragraph.
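A minimal sketch of that idea, not the code behind BFM2013tag: count how often each word appears in every section, then list the sections where a given word shows up, most frequent first. The function names and the sample text are hypothetical, chosen only for illustration.

```python
import re
from collections import Counter


def word_frequencies(sections):
    """Map each section title to a Counter of lowercase word counts."""
    return {
        title: Counter(re.findall(r"[a-z']+", text.lower()))
        for title, text in sections.items()
    }


def sections_for(word, frequencies):
    """Return (title, count) pairs for sections containing the word,
    sorted from the most frequent to the least frequent."""
    hits = [
        (title, counts[word])
        for title, counts in frequencies.items()
        if counts[word] > 0
    ]
    return sorted(hits, key=lambda pair: pair[1], reverse=True)


# Hypothetical sample text, not taken from the actual book.
sample = {
    "Chapter 1": "Change is cultural. Change is also organizational.",
    "Chapter 2": "Technology enables change, but people drive it.",
    "Chapter 3": "Knowledge transfer needs structure.",
}

freqs = word_frequencies(sample)
ranking = sections_for("change", freqs)
print(ranking)
```

The same counting works at any level of granularity (chapter, section, paragraph): only the keys of the `sections` dictionary change.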

This was a first prototype, but I shared it as part of book preparation activities.

As planned back then in January 2020, I eventually applied it to "OpenData" that are publicly available, but "augmented" by some analysis, integration, and restructuring.

Most data available online might well be open, but they aren't accessible to everyone: you need a set of computer- and data-related skills, and that generates a vicious circle.

Or: those who could understand and reuse the data do not know the tools, as maybe they are historians, accountants, or politicians, but neither computer scientists nor data scientists.

And the reverse is true: those who can process the data probably lack the "operational business" experience needed to extract value from seemingly unrelated bits of information.

For the time being, with this section I wanted to share the concept of "data democracy" until somebody develops an intuitive Alexa-style interface that enables anybody to "question" data, either for free or, better, in exchange for the value that they will add to the data.

As I wrote above: if data is everywhere and everyone is both a consumer and a producer, everyone will be able to contribute to continuous improvement of services, products, and, yes, societies built around data.

Again: this is just a prototype, a concept. More will follow.

And I have to extend my "thank you" to a few international institutions (notably, UN, Eurostat, ECB) that recently provided a few datasets that I already used to improve some of my written material.

So, it is just appropriate that I return some of the value by gradually sharing restructured and augmented data, to enable others to do what they see fit.

Since 2019, I have routinely released datasets on Kaggle.com/robertolofaro, usually along with sample data visualizations and analyses (using Jupyter Notebooks, initially in R, since 2020 in Python, mainly with Pandas, Seaborn, etc.).

To enable easier access to data, or related information, most datasets also have a companion webapp or website, often using the approach tested on BFM2013tag: search by tag cloud, but structured to clearly show the level of frequency. "Bubbles" are prettier, but less informative; it is better to simply classify keywords in 8 classes of frequency, higher-to-lower.
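As an illustration of the "8 classes of frequency" idea, here is a minimal sketch. How the classes are actually computed on the webapps is not described here, so the linear banding between the lowest and highest count is an assumption, and the function name and sample counts are hypothetical.

```python
def frequency_classes(counts, n_classes=8):
    """Assign each keyword a class from 1 (most frequent) to
    n_classes (least frequent), using equal-width bands between
    the lowest and the highest count (an assumed banding rule)."""
    if not counts:
        return {}
    low, high = min(counts.values()), max(counts.values())
    span = high - low
    classes = {}
    for word, count in counts.items():
        if span == 0:
            # All keywords are equally frequent: put them in the top class.
            classes[word] = 1
            continue
        # Position in [0, 1], where 1.0 is the most frequent keyword.
        position = (count - low) / span
        # Convert to a band index, clamping the lowest count into the last band.
        band = min(int((1 - position) * n_classes), n_classes - 1)
        classes[word] = band + 1
    return classes


# Hypothetical keyword counts, for illustration only.
keyword_counts = {"data": 80, "change": 45, "knowledge": 10, "notes": 1}
keyword_classes = frequency_classes(keyword_counts)
print(keyword_classes)
```

A tag cloud can then map each class to a fixed font size or colour, which keeps the relative frequency readable at a glance. A rank-based split (equal numbers of keywords per class) is an alternative when a few very frequent words would otherwise push everything else into the lowest classes.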

Since March 2020, when the first COVID19 lockdown started in Italy, I did some learning and micro-projects, so I plan to add more advanced webapps using the same information, but also testing the "relevance" and "interconnection" of keywords, not just "frequency".

Who knows? Maybe I and others could share a database that somebody will use to produce something as useful as some reports on the US Presidential Elections seen from a "data perspective" that I found within a discarded used book from the Sorbonne in Paris, chez Gilbert- and that, in turn, might help others "tune" their assessment of policies.

More data imply a greater need for collaboration if, when, and how needed, not just because there is a structural agreement to collaborate.

Why? The former, unstructured collaboration, is a temporary convergence of interests that is based on unknowns, while the latter is based on what is already known.

And while the latter is useful to generate incremental innovation, the former is what enables disruptive innovation.

Please refer to this link to access additional material on the concepts as applied to specific datasets
